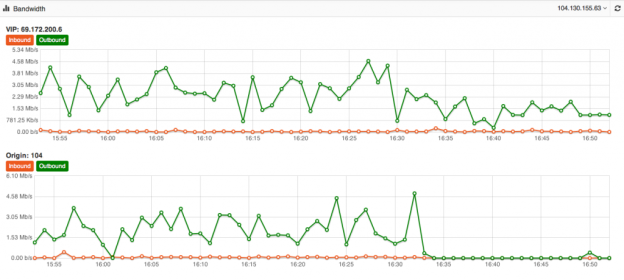

It’s one of the biggest mysteries I have seen in my 15+ years of Internet hosting and cloud-based services: why do people use a Content Delivery Network (CDN) for their website yet never fully optimize the site to take advantage of the CDN’s speed and volume capabilities? Just because you use a CDN doesn’t mean your site is automatically faster, or even able to take advantage of the CDN’s ability to dish out massive amounts of content in the blink of an eye.

At DOSarrest I have seen the same mystery continue, which is why I have put together this piece on using a CDN, in the hope that it helps those who wish to take full advantage of one. Most of this information is general and applies to any CDN, but I’ll also throw in some specifics that relate to DOSarrest.

Some common misconceptions about using a CDN

1. As soon as I’m configured to use a CDN, my site will be faster and able to handle a large number of web visitors on demand.
2. Website developers create websites that are already optimized, and a CDN won’t really change much.
3. There’s really nothing I can do to make my website run faster once it’s on a CDN.
4. All CDNs are pretty much the same.

Here’s what I have to say about the misconceptions noted above

1. In most cases the answer is NO! If the CDN is not caching your content, your site won’t be faster. In fact it will probably be a little slower, because every request has to go from the visitor to the CDN, which in turn fetches the content from your server and then sends the response back to the visitor.
2. In my opinion and experience, website developers in general do not optimize websites to use a CDN. In fact, most websites don’t even take full advantage of a browser’s caching capability. As the Internet has become faster everywhere, this fine art has been left by the wayside in most cases. Another reason, I think, is that websites are huge and complex, with a lot of dynamically generated content, and run on very fast servers with large amounts of memory. Why spend time optimizing caching when a fast server will overcome the overhead?
3. Oh yes you can, and that’s why I have written this piece. See below.
4. No, they aren’t. Many CDNs don’t want you to know how things are really working from every node they serve your content from, so you have to go out and subscribe to a third-party monitoring service. If you have to, do it; it can be fairly expensive but is well worth it. How else will you know how your site is performing from other geographic regions?

A good CDN should let you know the following in real time, but many don’t:

a. Number of connections/requests between the CDN and visitors.

b. Number of connections/requests between the CDN and your server (origin). You want the number of requests to your server to be lower than the number of requests from visitors to the CDN.
Tip: Use HTTP/1.1 on both “a” and “b” above, and try to extend the keep-alive time on the origin-to-CDN side.

c. Bandwidth between the CDN and Internet visitors.

d. Bandwidth between the CDN and your server (origin).
Tip: If the bandwidth of “c” and “d” is about the same, news flash: you can make things better.

e. Cache status of your content (how many requests are being served by the CDN).
Tip: This is the best metric for knowing whether you are using your CDN properly.

f. Performance metrics from outside the CDN but in the same geographic region.
Tip: Once you have performance metrics from several geographic regions, you can compare results from before and after moving to a CDN. If you’re caching properly, the improvement in load time should be greater the farther a region is from your origin server.
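To make the comparison of “a” against “b” and “c” against “d” concrete, here is a minimal sketch that turns those four counters into offload percentages. The class name, field names, and sample numbers are all made up for illustration; they are not taken from any CDN’s reporting API.

```python
from dataclasses import dataclass


@dataclass
class CdnMetrics:
    """Counters for one time window; field names are illustrative only."""
    visitor_requests: int  # (a) requests between visitors and the CDN
    origin_requests: int   # (b) requests between the CDN and the origin
    visitor_bytes: int     # (c) bytes sent from the CDN to visitors
    origin_bytes: int      # (d) bytes fetched by the CDN from the origin


def offload(edge_total: int, origin_total: int) -> float:
    """Fraction of edge traffic that never reached the origin, clamped to 0..1."""
    if edge_total <= 0:
        return 0.0
    return min(1.0, max(0.0, 1.0 - origin_total / edge_total))


def summarize(m: CdnMetrics) -> dict:
    """Request and bandwidth offload for one window of counters."""
    return {
        "request_offload": offload(m.visitor_requests, m.origin_requests),
        "bandwidth_offload": offload(m.visitor_bytes, m.origin_bytes),
    }


if __name__ == "__main__":
    # Hypothetical windows: one where nearly everything goes back to the
    # origin, and one where the CDN answers most requests itself.
    poorly_cached = CdnMetrics(10_000, 9_500, 800_000_000, 780_000_000)
    well_cached = CdnMetrics(10_000, 1_000, 800_000_000, 60_000_000)
    for label, m in (("poorly cached", poorly_cached), ("well cached", well_cached)):
        s = summarize(m)
        print(f"{label}: requests {s['request_offload']:.0%}, "
              f"bandwidth {s['bandwidth_offload']:.0%}")
```

An offload near zero means “b” is tracking “a” (or “d” is tracking “c”), which is the “news flash” case in the tips above. A value close to 1 suggests the CDN is answering most requests from cache.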
For the record, DOSarrest provides all of the metrics listed above in real time, and it’s these tools I’ll use to explain how to take full advantage of any CDN. Without metrics there’s no scientific way to know you’re on the right track to making your site super fast.

There are five main groups of cache-control headers that affect how and what is cached.

- Expires: When attempting to retrieve a resource, a browser will usually check whether it already has a copy available for reuse. If the Expires date has passed, the browser will download the resource again.
- Cache-Control: Introduced in HTTP/1.1, this expands on the functionality offered by Expires. Several directives are available:
  - public: This resource is cacheable. In the absence of any contradicting directive this is generally assumed.
  - private: This resource is cacheable by the end user only. Intermediate (shared) caches must not store it.
  - no-cache: A cache may keep a copy but must revalidate it with the origin before every reuse, so in practice each request goes back to the origin.
  - no-store: Do not cache, do not store the request. I was never here, we never spoke. Capiche?
  - must-revalidate: Do not use stale copies of this resource.
  - proxy-revalidate: The end user may use stale copies, but intermediate caches must revalidate.
  - max-age: The length of time (in seconds) before a resource is considered stale.

  A response may include any combination of these directives, for example: private, max-age=3600, must-revalidate.
- X-Accel-Expires: This serves the same purpose as the Expires header, but is intended only for proxy services (it is recognized by nginx). Browsers are meant to ignore it, and it should be stripped out when the response traverses a proxy.
- Set-Cookie: While not explicitly a cache directive, cookies are generally designed to hold user-specific and/or session-specific information. Caching such responses would have a negative impact on the desired site functionality.
- Vary: Lists the request headers that determine distinct copies of the resource. A cache needs to keep a separate copy of the resource for each distinct set of values in the headers named by Vary. A Vary value of “*” indicates that each request is unique.

Given that most websites, in my opinion, are not taking full advantage of caching by a browser or by a CDN (if they use one), there is still a way around this without reviewing and adjusting every cache-control header on your website. Any CDN worth its cost, as well as any cloud-based DDoS protection service, should be able to override most of a website’s cache-control headers.

For demonstration purposes we used our own live website, DOSarrest.com, and ran a traffic generator to stress the server a little on top of our regular visitor traffic. The demonstration shows what happens when traffic passes through a CDN, both between the CDN and the Internet visitor and between the CDN and the customer’s server on the back end.

At approximately 16:30 we enabled a feature of DOSarrest’s service that we call “Forced Caching”. It overrides, in other words ignores, some of the origin server’s cache-control headers.

These are the results:

Notice that bandwidth between the CDN and the origin (second graph) has fallen by over 90%. This saves resources on the origin server and makes things faster for the visitor.

This is the best graphic illustration to let you know that you’re on the right track. Cache hits go way up, not-cached responses go down, and expired responses and misses are negligible.

The graph below shows that requests to the origin have dropped by 90%. It’s telling you the CDN is doing the heavy lifting.

Last but not least, this is the fruit of your labor as seen by 8 sensors in 4 geographic regions from our customer “DEMS” portal. The site is running 10 times faster in every location, even under load!
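The header descriptions above can be sketched as a small decision function: given a response’s headers, for how long may a shared cache such as a CDN node serve it without going back to the origin? This is a deliberately simplified illustration, not how DOSarrest or any particular CDN implements caching. The function names are hypothetical, and it ignores Expires, X-Accel-Expires, and heuristic freshness. It also treats no-cache as “never serve without contacting the origin”.

```python
def parse_cache_control(value: str) -> dict:
    """Split a Cache-Control value into {directive: argument-or-None}."""
    directives = {}
    for part in value.split(","):
        part = part.strip()
        if not part:
            continue
        name, _, arg = part.partition("=")
        directives[name.strip().lower()] = arg.strip().strip('"') or None
    return directives


def shared_cache_ttl(headers: dict) -> int:
    """Seconds a shared cache may serve this response without revalidating.

    Returns 0 when the response should not be served from a shared cache.
    Simplified on purpose: only max-age is used to compute freshness.
    """
    h = {name.lower(): value for name, value in headers.items()}
    cc = parse_cache_control(h.get("cache-control", ""))

    # Directives that keep the response out of (or unusable from) a shared cache.
    if any(d in cc for d in ("no-store", "no-cache", "private")):
        return 0
    # Responses that set cookies usually carry user or session specific data.
    if "set-cookie" in h:
        return 0
    # "Vary: *" says every request is unique, so nothing can be reused.
    if h.get("vary", "").strip() == "*":
        return 0

    try:
        return max(0, int(cc.get("max-age") or 0))
    except ValueError:
        return 0  # malformed max-age: treat as not cacheable


if __name__ == "__main__":
    samples = [
        {"Cache-Control": "public, max-age=3600"},
        {"Cache-Control": "private, max-age=3600, must-revalidate"},
        {"Cache-Control": "max-age=600", "Set-Cookie": "session=abc"},
        {"Cache-Control": "max-age=600", "Vary": "*"},
    ]
    for headers in samples:
        print(headers, "->", shared_cache_ttl(headers))
```

A forced-caching override of the kind described above would, roughly speaking, replace the result of a function like this with a TTL chosen by the CDN operator for selected content. Which headers are overridden, and for which content, is specific to each service.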

Follow this link:

Featured article: How to use a CDN properly and make your website faster

Hacktivists have launched a distributed denial-of-service attack against the website of TMT (Thirty Meter Telescope), which is planned to be the Northern hemisphere’s largest, most advanced optical telescope. For at least two hours yesterday, the TMT website at www.tmt.org was inaccessible to internet users.

Sandra Dawson, a spokesperson for the TMT project, confirmed to the Associated Press that the site had come under attack: “TMT today was the victim of an unscrupulous denial of service attack, apparently launched by Anonymous. The incident is being investigated.”

You might think that a website about a telescope is a strange target for hackers wielding the blunt weapon of a DDoS attack, who might typically be more interested in attacking government websites for political reasons or taking down an unpopular multinational corporation. Why would hackers want to launch such a disruptive attack against a telescope website? Surely the only people who don’t like telescopes are the aliens in outer space who might be having their laundry peeped at from Earth?

It turns out there’s a simple reason why the Thirty Meter Telescope is stirring emotions so strongly: it hasn’t been built yet. The construction of the proposed TMT is controversial because it is planned to be built on Mauna Kea, a dormant 13,796-foot-high volcano in Hawaii. This has incurred the wrath of environmentalists and native Hawaiians who consider the land to be sacred. There has been considerable opposition to the building of the telescope on Mauna Kea, as this news report from last year makes clear.

Now it appears the protest about TMT has spilt over onto the internet in the form of a denial-of-service attack. Operation Green Rights, an Anonymous-affiliated group which also campaigns against controversial corporations such as Monsanto, claimed on its Twitter account and website that it was responsible for the DDoS attack. The hacktivists additionally claimed credit for taking down Aloha State’s official website.

It is clear that denial-of-service attacks are being deployed more and more, as perpetrators attempt to use the anonymity of the internet to hide their identity and stage the digital version of a “sit down protest” or blockade to disrupt organisations. Tempting as it may be to participate in a DDoS attack, it’s important that everyone remembers that if the authorities determine you were involved you can end up going to jail as a result. Peaceful, law-abiding protests are always preferable.

Source: http://www.welivesecurity.com/2015/04/27/tmt-website-ddos/
